Marshall,

There is some fascinating work happening in this thread; they are successfully turning off AA using nothing but gameshark codes.

http://assemblergames.com/l/threads/is- ... ats.59916/

I imagine the answer is no, but could a similar thing be done in UltraHDMI firmware?

jrra and I were actually playing around with the same thing before we found that post. I can shed some more light on how the AA works and what you would gain (or not). It all happens inside the N64 and I can't see any reliable way to reverse it with UltraHDMI.

Antialiasing on N64 happens in 2 separate places at 2 separate times.

Case 1.

When multiple triangles/fragments are exactly adjacent to one another, most of the time they will share an edge inside a single pixel. The blender inside the RDP shades the overall pixel based on what proportion of each fragment is showing. In order to do a blend, it has to read the pixels from the surrounding area out of RDRAM, modify them, and then write them back. This is called an RMW (read-modify-write) process and it has a deleterious effect on RAM bandwidth.

Whether this happens or not is specified by the RDP render mode which is most commonly an instruction in a display list that the RDP is executing. This displaylist could be dynamically generated by the CPU at runtime, or it could be a static blob that is loaded from cart, either uncompressed or compressed/encoded. (Most games at least compress their display assets).

So to disable or enable this, you'd need to patch all displaylists that set the AA_EN blender bit. A very tedious process that would be different for each game engine - basically extracting all the displaylists, then injecting back into the ROM and hoping it works. Very possible but very tedious.
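To give a rough idea of what such a patch boils down to once a display list has been extracted, here is a minimal sketch in C. It walks a buffer of 64-bit commands and clears the AA_EN bit (0x8 in the low other-mode word, the value used in gbi.h) on every command with a matching opcode. The helper name `dl_clear_aa` is hypothetical, the opcode is a parameter because it depends on the microcode, and the sketch assumes the list is already decompressed and contains no inline data that merely looks like a command. None of that is guaranteed for a real ROM.

```c
#include <stddef.h>
#include <stdint.h>

/* AA_EN render-mode bit in the low other-mode word (value as in gbi.h). */
#define AA_EN 0x00000008u

/*
 * Hypothetical helper: clear AA_EN in every command whose top byte equals
 * `opcode`. `cmds` holds 64-bit display list commands as host integers
 * (upper 32 bits = word 0, lower 32 bits = word 1). Which opcode carries
 * the render mode is microcode-specific, so the caller supplies it.
 * Returns the number of commands that were changed.
 */
static size_t dl_clear_aa(uint64_t *cmds, size_t count, uint8_t opcode)
{
    size_t patched = 0;
    for (size_t i = 0; i < count; i++) {
        if ((uint8_t)(cmds[i] >> 56) != opcode)
            continue;                     /* not a mode-setting command */
        if (cmds[i] & AA_EN) {
            cmds[i] &= ~(uint64_t)AA_EN;  /* drop the blender AA bit */
            patched++;
        }
    }
    return patched;
}
```

As far as I recall, 0xEF is the set-other-modes opcode in the F3DEX-family gbi.h, so a call would look like `dl_clear_aa(list, n, 0xEF)`, but check that against the microcode the game actually uses. The hard part is still finding and re-injecting every list, not flipping the bit.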

But wait, we're not done yet - when this option is enabled, as it is most of the time, the blender ALSO saves "coverage information" alongside the visual data. This is crucial data for the second case of doing AA, and yet again consumes precious RAM bandwidth. Fun fact: This is the only place the 9th bit of RDRAM is ever used.

The AA patches so far do not disable this first operation being done by the blender. As a result, interior polygon edges are still antialiased, and there is still a performance penalty.

Case 2.

When a polygon is rendered against an existing part of the background, the blender can't know how to blend the edges since it doesn't have a complete picture of what the result will look like, and you can't just go blending willy-nilly. So, the second stage is basically reading the coverage information the blender previously generated while drawing, and blending pixels at the time they are sent to the TV.

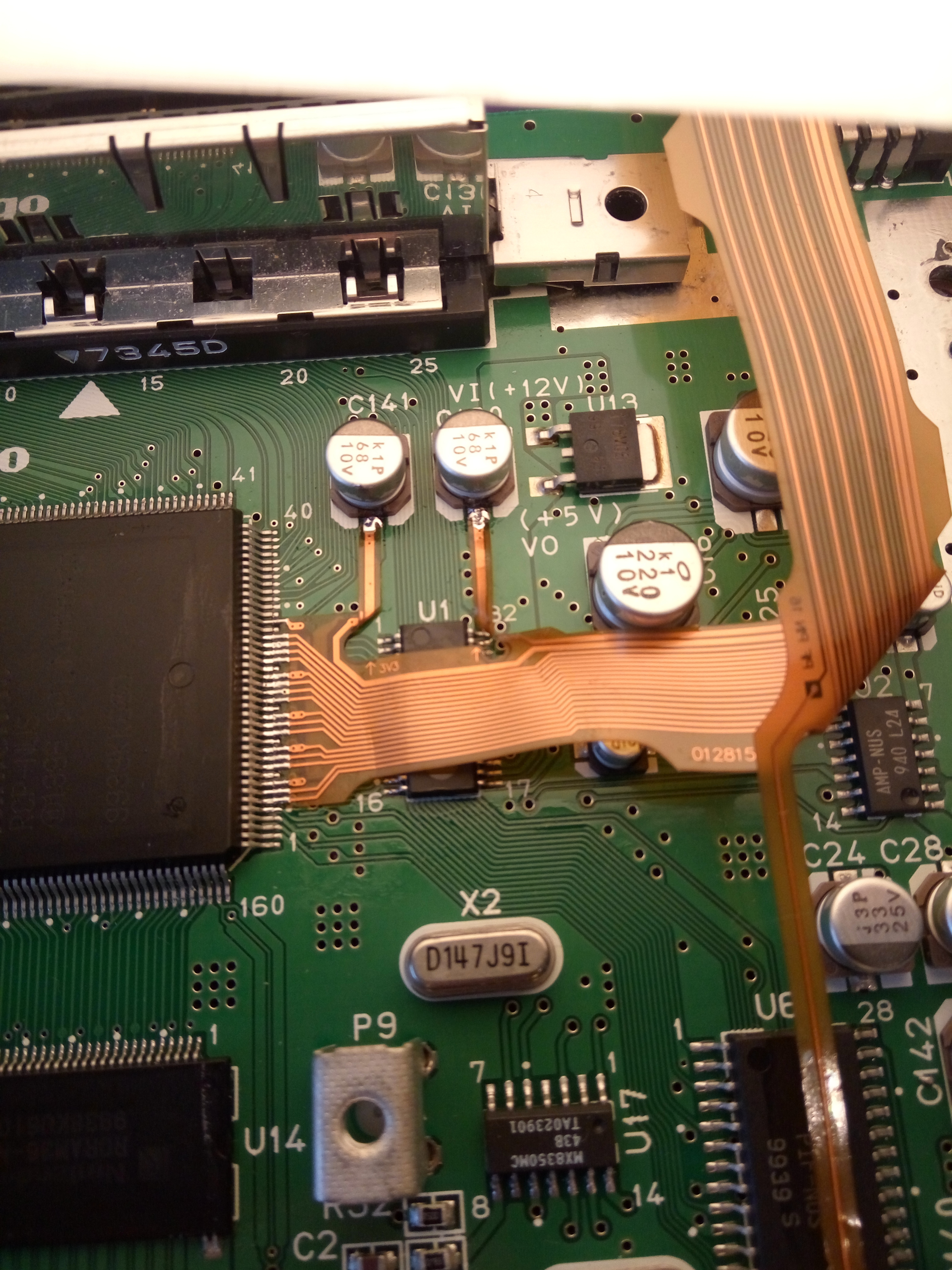

The VI (video interface) is the part of the RCP that does this - it generates the TV sync timings and does gamma boost, noise dithering, etc. As it pushes out the image to the screen, it's also looking at the coverage bits and blending pixels according to how the triangles were marked to overlap.

To blend, it must read out adjacent pixels from the buffer, then write the result back - another RMW cycle. Again, this kills RAM bandwidth.

However, unlike Case 1, it's much easier to tell the VI to bypass this process - don't blend, and just output what is already in memory. This is what the Gameshark patches are doing - patching writes to the VI mode register to disable case 2 AA processing.
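As a sketch of what that register patch amounts to: the AA mode is a 2-bit field in the VI control word, and the patch rewrites that field before the value reaches the hardware. The bit positions below are as I recall them from the public libultra rcp.h, so treat them as assumptions to verify; the macro and function names are made up for this example.

```c
#include <stdint.h>

/* VI control register AA field (bits 9:8). Values are assumptions taken
 * from memory of the public rcp.h; the names here are hypothetical. */
#define VI_CTRL_ANTIALIAS_MASK   0x00000300u
#define VI_CTRL_AA_MODE_RESAMPLE 0x00000200u /* resample only, no AA   */
#define VI_CTRL_AA_MODE_NONE     0x00000300u /* neither AA nor resample */

/*
 * Hypothetical helper: return `ctrl` with the VI (Case 2) antialiasing
 * turned off. keep_resample != 0 keeps the VI's interpolating scaler;
 * 0 selects plain pixel replication. All other bits are left untouched.
 */
static uint32_t vi_disable_aa(uint32_t ctrl, int keep_resample)
{
    ctrl &= ~VI_CTRL_ANTIALIAS_MASK;
    ctrl |= keep_resample ? VI_CTRL_AA_MODE_RESAMPLE : VI_CTRL_AA_MODE_NONE;
    return ctrl;
}
```

A cheat-style patch would apply the same masking to the constant a game stores in its VI mode table, or to the value just before it is written to the register. Note that this does nothing about the Case 1 blending or the coverage writes done by the RDP.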

Performance.

Each step incurs a penalty on RAM bandwidth. Because the N64 uses a unified memory system, literally everything bangs on RDRAM constantly - CPU code, microcode, video rendering, texture fetching, video output, audio output... I believe that the single-channel Rambus system is probably the worst weak point of the N64 besides the tiny texture cache.

Every time the Rambus controller has to service a new request, it has to drop what it's doing, service the request, and then resume something else. As we all know, Rambus has very good peak throughput (0.5 gigabyte/second on N64, which was very good at the time), but terrible latency. It's like using a Ferrari to drive around cramped city streets - it works, but not very well, and to really fly you have to go in a straight line without stopping.

Since many subsystems are hammering on the RAC, you want to minimize whatever you can. Disabling both steps of AA can have significant gains. Some other systems that cause problems are the RMWs incurred by using the Z-buffer (should be minimized), the divot filter, and the dither filter.

I tested with my MGC demo and found that with 640x340 framebuffers, turning on Case 1 halves throughput. Turning on Case 2 halves throughput yet again. So, in high-res with triple buffering, full AA can have a 4x speed penalty.

Many games only use double-buffering, so the render time is quantized to a whole number of Vsyncs (10 Hz, 15 Hz, 20 Hz, 30 Hz, 60 Hz, etc.). Disabling both AA cases would prevent slowdown in many games, but it is very difficult to implement.
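The quantization is easy to see with a little arithmetic. The sketch below is a simplified model, assuming a buffer swap can only happen on a vertical blank and that a frame therefore occupies its render time rounded up to a whole number of vblanks. The function names and the render times in the usage note are illustrative, not measurements.

```c
#include <stdint.h>

/*
 * Number of whole vertical blanks one frame occupies when swaps only
 * happen on a vblank. render_us is the render time in microseconds;
 * refresh_hz is nominally 60 (NTSC) or 50 (PAL).
 */
static uint32_t vblanks_per_frame(uint32_t render_us, uint32_t refresh_hz)
{
    uint64_t scaled = (uint64_t)render_us * refresh_hz;
    uint32_t n = (uint32_t)((scaled + 999999u) / 1000000u); /* ceiling */
    return n ? n : 1;  /* a frame always takes at least one vblank */
}

/* Displayed frame rate in Hz, rounded down to an integer. */
static uint32_t displayed_fps(uint32_t render_us, uint32_t refresh_hz)
{
    return refresh_hz / vblanks_per_frame(render_us, refresh_hz);
}
```

With a made-up 12 ms render time, `displayed_fps(12000, 60)` gives 60. Double the render time to 24 ms and it drops to 30; quadruple it to 48 ms and it drops to 20. Even a frame that takes 17 ms, just over one vblank, lands on 30, which is why shaving a little RAM traffic can make a visible difference.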

Other performance hogs

Z-buffer - as before, reduces pixel fill rate by 2 to 3 times. Can't be turned off easily and most games would break without it.

Divot filter - a median filter in the VI that patches 1-pixel holes left over by limitations in the AA. Has to load all adjacent pixel data, with about 5-10% effect on performance. Easy to disable and the holes can be overlooked.

Dither filter - in the VI, basically smears the entire output to hide dithering. Similar performance hit. Easy to disable, but some games look downright terrible without it (Starfox).

Deflickered high-res modes - in interlaced mode, single-pixel details will flicker due to missing every other TV field. This mode reduces it by blending the field outputs with adjacent scanlines - again it requires accessing 2 scanlines just to output 1 which has a small performance hit. Easy to disable by patching all Y-scaling coefficients in game VI mode tables.

"Free" features

Gamma boost and dither - Applies a nonlinear brightness curve to the output. Because the color output is only 21 bits, there is a good reason to also enable gamma dither, which has no performance cost - it's almost invisible yet prevents ugly Mach banding.
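For the VI-side options in the two lists above, the toggles are single bits in the same VI control word. A minimal sketch, assuming the bit values I remember from the public rcp.h (verify before relying on them) and using a hypothetical helper name: it switches off the divot and dither filters and leaves the "free" gamma bits alone.

```c
#include <stdint.h>

/* VI control register bits; values are assumptions recalled from the
 * public rcp.h and should be checked against real documentation. */
#define VI_CTRL_GAMMA_DITHER_ON  0x00000004u
#define VI_CTRL_GAMMA_ON         0x00000008u
#define VI_CTRL_DIVOT_ON         0x00000010u
#define VI_CTRL_DITHER_FILTER_ON 0x00010000u

/*
 * Hypothetical helper: clear the two filter bits that cost bandwidth and
 * keep everything else, including gamma and gamma dither, unchanged.
 */
static uint32_t vi_disable_filters(uint32_t ctrl)
{
    return ctrl & ~(VI_CTRL_DIVOT_ON | VI_CTRL_DITHER_FILTER_ON);
}
```

The deflicker mode is not covered here because it lives in the Y-scaling coefficients rather than in a single control bit.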

Pictures

Here is an example where Case 2 anti-aliasing was turned off, but the Case 1 blending is still happening. See the edge under Mario.

http://retroactive.be/img/i/ra56f591b0718610475.png

Again, Case 1 blending (interior edges), but Case 2 VI blending disabled. Divot filter disabled; notice the 3 "hole" pixels in the N.

http://retroactive.be/img/i/ra56d341021b43f599f.png

All AA turned on.

http://retroactive.be/img/i/ra56d34391aaf1d7e42.png

Virtual Pool - uses high-res interlaced deflickered mode. Notice the vertical blurring.

http://retroactive.be/img/i/ra55e77c8c925b14df6.png

TL;DR: AA on N64 is a multi-step process and so far it's only easy to nix the very last step, but some AA is still being done, and there are real performance gains if you can fix both processes.

Personally, I don't mind the AA at all, especially with HDMI.